Notes on WebGPU Fundamentals

February 17, 2024•45,828 words

This is a summary of webgpufundamentals.org so all credits to them.

Some of the code is shortened or changed.

I am by far not done reading it yet, so this post will be continuously updated.

WebGPU is meant to be the successor of WebGL which is based on OpenGL, first developed in 1992, and to more efficiently interact with modern GPU hardware.

The API is already available on Chrome and Edge but still marked as experimental.

Basically all it does is run 3 types of functions on the GPU:

Vertex Shader

Fragment Shader

Compute Shader

Initialization, Configuration

Add <canvas> tag to html and this javascript

async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('Your browser does not support WebGPU');return;}const canvas = document.querySelector('canvas');const context = canvas.getContext('webgpu');const presentationFormat = navigator.gpu.getPreferredCanvasFormat();context.configure({device,format: presentationFormat,});}main();

Create shader module with a vertex shader and fragment shader function

const module = device.createShaderModule({label: 'our hardcoded red triangle shaders',code: `@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> @builtin(position) vec4f {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);return vec4f(pos[vertexIndex], 0.0, 1.0);}@fragment fn fs() -> @location(0) vec4f {return vec4f(1.0, 0.0, 0.0, 1.0);}`,});

Shaders are written in WGSL. (WebGPU shader language)

The parameter vertexIndex, which is a 32-bit unsigned integer, gets its value from the builtin vertex_index,

which is the iteration number.

The vertex function returns a vec4f which is a vector of four 32-bit floating point values which

will be assigned to the position builtin.

Positions need to be returned in clip space where X and Y go from -1.0 to +1.0, regardless of the size of the texture that is drawn to.

The fragment shader function returns a vec4f at location(0)

this means that it'll write to the first render target.

The vec4f values correspond to red, green, blue, and alpha.

When the GPU rasterizes the triangle (draws it with pixels), it will call the fragment shader to find out what color to make each pixel.

The label is optional but best practice, most WebGPU implementations will print an error message that includes the labels of the things related to the error.

Create Render Pipeline

const pipeline = device.createRenderPipeline({label: 'hardcoded red triangle pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: presentationFormat }],},});

Defines the vertex and fragment shaders to be run by the GPU.

It may also define attributes that reference buffers.

Create Render Pass

const renderPassDescriptor = {label: 'our basic canvas renderPass',colorAttachments: [{// view: <- to be filled out when renderingclearValue: [0.3, 0.3, 0.3, 1], //semi-dark grayloadOp: 'clear',storeOp: 'store',},],};

The array for colorAttachments lists the textures to render and how to treat them.

loadOp specifies to clear the texture to the clear value before drawing.

storeOp tells to store what is drawn, 'discard' would throw it away.

Rendering

function render() {renderPassDescriptor.colorAttachments[0].view =context.getCurrentTexture().createView();const encoder = device.createCommandEncoder({ label: 'our encoder' });const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);pass.draw(3);pass.end();const commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);}render();

First it gets the current texture from the canvas context and sets it as the texture to render to. Then a command encoder (used to create a command buffer) and a render pass encoder for render specific commands is created and a pipeline is set for it. The vertex shader is called 3 times, the render pass is then ended and the encoder finished. Finally, the resulting command buffer is submitted to be executed.

Computations

Basic example for running some computation on the GPU,

doubling some numbers

async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('need a browser that supports WebGPU');return;}const module = device.createShaderModule({label: 'doubling compute module',code: `@group(0) @binding(0) var<storage, read_write> data: array<f32>;@compute @workgroup_size(1) fn computeSomething(@builtin(global_invocation_id) id: vec3<u32>) {let i = id.x;data[i] = data[i] * 2.0;}`,});const pipeline = device.createComputePipeline({label: 'doubling compute pipeline',layout: 'auto',compute: {module,entryPoint: 'computeSomething',},});const input = new Float32Array([1, 3, 5]);// create a buffer on the GPU to hold the computation// input and outputconst workBuffer = device.createBuffer({label: 'work buffer',size: input.byteLength,usage: GPUBufferUsage.STORAGE | GPUBufferUsage.COPY_SRC | GPUBufferUsage.COPY_DST,});// Copy the input data to that bufferdevice.queue.writeBuffer(workBuffer, 0, input);// create a buffer on the GPU to get a copy of the resultsconst resultBuffer = device.createBuffer({label: 'result buffer',size: input.byteLength,usage: GPUBufferUsage.MAP_READ | GPUBufferUsage.COPY_DST,});// Setup a bindGroup to tell the shader which// buffer to use for the computationconst bindGroup = device.createBindGroup({label: 'bindGroup for work buffer',layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: workBuffer } },],});// Encode commands to do the computationconst encoder = device.createCommandEncoder({label: 'doubling encoder',});const pass = encoder.beginComputePass({label: 'doubling compute pass',});pass.setPipeline(pipeline);pass.setBindGroup(0, bindGroup);pass.dispatchWorkgroups(input.length);pass.end();// Encode a command to copy the results to a mappable buffer.encoder.copyBufferToBuffer(workBuffer, 0, resultBuffer, 0, resultBuffer.size);// Finish encoding and submit the commandsconst commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);// Read the resultsawait resultBuffer.mapAsync(GPUMapMode.READ);const result = new Float32Array(resultBuffer.getMappedRange().slice());resultBuffer.unmap();console.log('input', input);console.log('result', result);}main();

Since contents of a WebGPU buffer cant directly be read from JavaScript

they have to be "mapped" because the buffer might be in use and might only exist on the GPU.

Buffers that can be mapped in JavaScript cant be used for much else, so theyre an addition to which the values from another buffer are copied to.

The iteration number in compute shaders are 3 dimensional.

This is a simplification of how they work:

function dispatchWorkgroups(width, height, depth) {for (z = 0; z < depth; ++z) {for (y = 0; y < height; ++y) {for (x = 0; x < width; ++x) {const workgroup_id = {x, y, z};dispatchWorkgroup(workgroup_id)}}}}function dispatchWorkgroup(workgroup_id) {// from @workgroup_size in WGSLconst workgroup_size = shaderCode.workgroup_size;const {x: width, y: height, z: depth} = workgroup_size;for (z = 0; z < depth; ++z) {for (y = 0; y < height; ++y) {for (x = 0; x < width; ++x) {const local_invocation_id = {x, y, z};const global_invocation_id =workgroup_id * workgroup_size + local_invocation_id;computeShader(global_invocation_id)}}}}

Canvas Resizing

The <canvas> tag, by default, has a resolution of 300x150 pixels.

Simply changing its width/height wont change the resolution.

const canvasToSizeMap = new WeakMap();const observer = new ResizeObserver(entries => {for (const entry of entries) {canvasToSizeMap.set(entry.target, {width: entry.contentBoxSize[0].inlineSize,height: entry.contentBoxSize[0].blockSize,});}// Get the canvas's current display sizelet { width, height } = canvasToSizeMap.get(canvas) || canvas;// Make sure it's valid for WebGPUwidth = Math.max(1, Math.min(width, device.limits.maxTextureDimension2D));height = Math.max(1, Math.min(height, device.limits.maxTextureDimension2D));// Only if the size is different, set the canvas sizeconst needResize = canvas.width !== width || canvas.height !== height;if (needResize) {canvas.width = canvas.clientWidth * window.devicePixelRatio;canvas.height = canvas.clientHeight * window.devicePixelRatio;}render();});observer.observe(canvas);

canvas.width and canvas.height can also simply be assigned the local variables width and height but I replaced it with a multiplication by window.devicePixelRatio for a sharper image on HiDPI displays.

Extra Checks

A device can be "lost" for many reasons. The user may have run an intensive app and crashed the GPU or check for lost device or disabled WebGPU.

async function start() {if (!navigator.gpu) {alert('This browser does not support WebGPU');return;}const adapter = await navigator.gpu.requestAdapter();if (!adapter) {alert('this browser supports WebGPU but it appears disabled');return;}const device = await adapter?.requestDevice();device.lost.then((info) => {console.error(`WebGPU device was lost: ${info.message}`);// reason will be 'destroyed' if the device was intentionally destroyed.if (info.reason !== 'destroyed') {start();}});main(device);}start();function main(device) {... do webgpu ...}

Inter-Stage Variables

The vertex shader can output extra values at positions and by default interpolate those values between them.

In the shader module:

const module = device.createShaderModule({label: 'hardcoded rgb triangle shaders',code: `struct OurVertexShaderOutput {@builtin(position) position: vec4f,@location(0) color: vec4f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> VertexShaderOutput {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);var color = array<vec4f, 3>(vec4f(1, 0, 0, 1), // redvec4f(0, 1, 0, 1), // greenvec4f(0, 0, 1, 1), // blue);var vsOutput: VertexShaderOutput;vsOutput.position = vec4f(pos[vertexIndex], 0.0, 1.0);vsOutput.color = color[vertexIndex];return vsOutput;}@fragment fn fs(fsInput: VertexShaderOutput) -> @location(0) vec4f {return fsInput.color;}`,});

First a struct is declared in the vertex shader, which will be like a type/interface for the returned value.

A vec4f "color" is added to the buildtin position.

When returning, an instance of the structure is declared and its properties filled out.

The fragment shader is changed to take one of these structs as an argument.

The connection between vertex shader and fragment shader is by index.

For inter-stage variables, they connect by location index.

Output:

Checkerboard

The following code draws a checkerboard into the triangle

const module = device.createShaderModule({label: 'hardcoded checkerboard triangle shaders',code: `struct VertexShaderOutput {@builtin(position) position: vec4f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> VertexShaderOutput {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);var vsOutput: OurVertexShaderOutput;vsOutput.position = vec4f(pos[vertexIndex], 0.0, 1.0);return vsOutput;}@fragment fn fs(fsInput: VertexShaderOutput) -> @location(0) vec4f {let black = vec4f(0, 0, 0, 1);let white = vec4f(1, 1, 1, 1);let grid = vec2u(fsInput.position.xy) / 8;let checker = (grid.x + grid.y) % 2 == 1;return select(black, white, checker);}`,});

The fragment shader takes the position input, converts it to a vec2u,

divides the 2 values by 8, which makes a count that increases every 8 pixels,

and then adds the x and y coordinates together, compues modulo 2, and compares the result to 1.

This makes a boolean that is true or false for every other integer.

Finally, it uses the select function which selects one or the other value based on the boolean as condition.

Note: checkerboards and other patterns are more commonly drawn using textures.

If its based on the pixel coordinates its relative to the canvas, not the triangle.

Splitted Modules

Both shaders here are in the same module,

they can also be split into separate modules and work the same

const vsModule = device.createShaderModule({label: 'hardcoded triangle',code: `struct OurVertexShaderOutput {@builtin(position) position: vec4f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> OurVertexShaderOutput {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);var vsOutput: OurVertexShaderOutput;vsOutput.position = vec4f(pos[vertexIndex], 0.0, 1.0);return vsOutput;}`,});const fsModule = device.createShaderModule({label: 'checkerboard',code: `@fragment fn fs(@builtin(position) pixelPosition: vec4f) -> @location(0) vec4f {let red = vec4f(1, 0, 0, 1);let cyan = vec4f(0, 1, 1, 1);let grid = vec2u(pixelPosition.xy) / 8;let checker = (grid.x + grid.y) % 2 == 1;return select(red, cyan, checker);}`,});const pipeline = device.createRenderPipeline({label: 'hardcoded checkerboard triangle pipeline',layout: 'auto',vertex: {module: vsModule,entryPoint: 'vs',},fragment: {module: fsModule,entryPoint: 'fs',targets: [{ format: presentationFormat }],},});

Interpolation Settings

There are 2 types of settings:

Type

perspective: values are interpolated in a perspective correct manner (default)linear: in a linear, non-perspective correct mannerflat: not interpolated

Sampling

center: performed at the center of the pixel (default)centroid: performed at the point that lies within all the samples covered by the fragment within the current primitivesample: performed per sample, fragment shader is invoked once per sample

These attributes can be specified like this

@location(2) @interpolate(linear, center) myVariableFoo: vec4f;@location(3) @interpolate(flat) myVariableBar: vec4f;

Note: if the inter-stage variable is an integer type then the interpolation must be set to flat.

Memory Layout

In WGSL v1, there are 4 base types

f32(a 32bit floating point number)i32(a 32bit integer)u32(a 32bit unsigned integer)f16(a 16bit floating point number)

A byte is 8 bits so a 32 bit value takes 4 bytes and a 16 bit value takes 2 bytes.

For example this struct

struct ExampleStruct {velocity: f32,acceleration: f32,frameCount: u32,};

would be 12 bytes.

To pass data to a shader it would need to be prepared to match the memory layout of the struct.

const exampleStructData = new ArrayBuffer(12);const exampleStructValuesAsF32 = new Float32Array(exampleStructData);const exampleStructValuesAsU32 = new Uint32Array(ourStructData);

An ArrayBuffer is a chunk of memory,

a view has to be created to take a look at the contents of this memory.

Views can be created in different data types.

The data can then be set in the structure:

exampleStructValuesAsF32[0] = 1.2; //VelocityexampleStructValuesAsU32[2] = 56; //FrameCount

TypedArrays have a constructor that takes various forms. Reference

Following are the properties of TypedArray

length: number of unitsbyteLength: size in bytesbyteOffset: offset in the TypedArray’s ArrayBufferbuffer: the ArrayBuffer this TypedArray is viewing

It also has various methods. For example subarray, which creates a new array of the same buffer from the specified start and end (end is not included).

So having multiple views of the same buffer is also possible

const v1 = new Float32Array(5);const v2 = v1.subarray(3, 5); // view the last 2 floats of v1v2[0] = 123;v2[1] = 456;console.log(v1); // shows 0, 0, 0, 123, 456

Note: the map function of a TypedArray creates a new array or the same type,

mapping into another type can be done with Array.from

const f32d = Array.from(f32a).map(v => `${v} doubled = ${v *2}`);

vec and mat types

Uniforms

Uniforms are like global variables for the shader.

The value can be set before the shader is executed and it'll have those values for every iteration.

const module = device.createShaderModule({label: 'triangle shaders with uniforms',code: `struct TestStruct {color: vec4f,scale: vec2f,offset: vec2f,};@group(0) @binding(0) var<uniform> testStruct: TestStruct; <-----@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> @builtin(position) vec4f {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);return vec4f(pos[vertexIndex] * testStruct.scale + testStruct.offset, 0.0, 1.0);}@fragment fn fs() -> @location(0) vec4f {return testStruct.color;}`,});

- Next create a buffer on the GPU to hold values for the shaders

and set the flags so it can be used with uniforms and be updated by copying data to it, - also create a

TypedArrayto hold and set the values in JS. - Then fill out two of the values that wont be changing later, the second parameter in

setis at which byteoffset the value is placed. - Then, to tell the shader about the buffer, create a bind group and bind the buffer to the same

@bindingthat is set in the shader. - In the render function, set the remaining one of

uniformValuesand copy them to the buffer on the GPU.

(BesideswriteBufferthere are several other ways to copy data to a buffer) - Finally, set the bind group before drawing.

const uniformBufferSize = 32 //color is 4*4 bytes, scale and offset are 2*4 bytesconst uniformBuffer = device.createBuffer({size: uniformBufferSize,usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST,});const uniformValues = new Float32Array(uniformBufferSize / 4); //creates its own ArrayBuffer with 4 bytes times the amount of the parameter, so divide by 4uniformValues.set([0, 1, 0, 1], 0); //coloruniformValues.set([-0.5, -0.25], 6); //offsetconst bindGroup = device.createBindGroup({layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: uniformBuffer }},],});function render() {const aspect = canvas.width / canvas.height;uniformValues.set([0.5 / aspect, 0.5], 4); //scaledevice.queue.writeBuffer(uniformBuffer, 0, uniformValues);...pass.setBindGroup(0, bindGroup);pass.draw(3);...}

Randomize variables

const rand = (min, max) => {return min + Math.random() * (max - min);};const numObjects = 100;const objectInfos = [];for (let i = 0; i < numObjects; ++i) {const uniformBuffer = device.createBuffer({label: `uniforms for obj: ${i}`,size: 32,usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST,});const uniformValues = new Float32Array(uniformBufferSize / 4);uniformValues.set([rand(), rand(), rand(), 1], 0); //coloruniformValues.set([rand(-0.9, 0.9), rand(-0.9, 0.9)], 6); //offsetconst bindGroup = device.createBindGroup({label: `bind group for obj: ${i}`,layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: uniformBuffer }},],});objectInfos.push({scale: rand(0.2, 0.7),uniformBuffer,uniformValues,bindGroup,});}function render() {renderPassDescriptor.colorAttachments[0].view =context.getCurrentTexture().createView();const encoder = device.createCommandEncoder();const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);const aspect = canvas.width / canvas.height;for (const {scale, bindGroup, uniformBuffer, uniformValues} of objectInfos) {uniformValues.set([scale / aspect, scale], 4); //scaledevice.queue.writeBuffer(uniformBuffer, 0, uniformValues);pass.setBindGroup(0, bindGroup);pass.draw(3);}pass.end();const commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);}

Split Uniforms

const module = device.createShaderModule({code: `struct ObjStruct {color: vec4f,offset: vec2f,};struct ObjScaleStruct {scale: vec2f,};@group(0) @binding(0) var<uniform> objStruct: ObjStruct;@group(0) @binding(1) var<uniform> objScaleStruct: ObjScaleStruct;@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> @builtin(position) vec4f {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);return vec4f(pos[vertexIndex] * objScaleStruct.scale + objStruct.offset, 0.0, 1.0);}@fragment fn fs() -> @location(0) vec4f {return objStruct.color;}`,});const staticUniformBufferSize = 32; //color is 4*4bytes, offset 2*4bytes, and 2*4bytes paddingconst uniformBufferSize = 8; //scale is 2*4bytesconst kNumObjects = 100;const objectInfos = [];for (let i = 0; i < kNumObjects; ++i) {const staticUniformBuffer = device.createBuffer({label: `static uniforms for obj: ${i}`,size: staticUniformBufferSize,usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST,});{const uniformValues = new Float32Array(staticUniformBufferSize / 4);uniformValues.set([rand(), rand(), rand(), 1], 0); //coloruniformValues.set([rand(-0.9, 0.9), rand(-0.9, 0.9)], 4); //offsetdevice.queue.writeBuffer(staticUniformBuffer, 0, uniformValues);}const uniformValues = new Float32Array(uniformBufferSize / 4);const uniformBuffer = device.createBuffer({label: `changing uniforms for obj: ${i}`,size: uniformBufferSize,usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST,});const bindGroup = device.createBindGroup({label: `bind group for obj: ${i}`,layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: staticUniformBuffer }},{ binding: 1, resource: { buffer: uniformBuffer }},],});objectInfos.push({scale: rand(0.2, 0.5),uniformBuffer,uniformValues,bindGroup,});}

The render() code doesnt change except for the scale offset in uniformValues which is 0 now.

The bind group for each object contains a reference to both uniform buffers.

When setting the scale now it only updates that instead of all the values.

Storage Buffers

Differences between uniform buffers and storage buffers:

- Uniform buffers can be faster for their typical use-case

- Storage buffers can be much larger than uniform buffers

- min-max size of a uniform buffer is 64k

- min-max size of a storage buffer is 128meg - Storage buffers can be read/write, uniform buffers are read-only

Instancing

Random triangles code changed to use storage buffers and draw all objects in a single draw call.

const rand = (min, max) => {if (min === undefined) {min = 0;max = 1;} else if (max === undefined) {max = min;min = 0;}return min + Math.random() * (max - min);};async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('need a browser that supports WebGPU');return;}// Get a WebGPU context from the canvas and configure itconst canvas = document.querySelector('canvas');const context = canvas.getContext('webgpu');const presentationFormat = navigator.gpu.getPreferredCanvasFormat();context.configure({device,format: presentationFormat,});const module = device.createShaderModule({code: `struct TestStruct {color: vec4f,offset: vec2f,};struct ScaleStruct {scale: vec2f,};struct VSOutput {@builtin(position) position: vec4f,@location(0) color: vec4f,}@group(0) @binding(0) var<storage, read> testStructs: array<TestStruct>;@group(0) @binding(1) var<storage, read> scaleStructs: array<ScaleStruct>;@vertex fn vs(@builtin(vertex_index) vertexIndex : u32,@builtin(instance_index) instanceIndex: u32) -> VSOutput {let pos = array(vec2f( 0.0, 0.5), // top centervec2f(-0.5, -0.5), // bottom leftvec2f( 0.5, -0.5) // bottom right);let scaleStruct = scaleStructs[instanceIndex];let testStruct = testStructs[instanceIndex];var vsOut: VSOutput;vsOut.position = vec4f(pos[vertexIndex] * scaleStruct.scale + testStruct.offset, 0.0, 1.0);vsOut.color = testStruct.color;return vsOut;}@fragment fn fs(vsOut: VSOutput) -> @location(0) vec4f {return vsOut.color;}`,});const pipeline = device.createRenderPipeline({label: 'split storage buffer pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: presentationFormat }],},});const numObjects = 100;// create 2 storage buffersconst staticStorageUnitSize = 32; //color 4*4 bytes, offset 2*4, buffer 2*4const storageUnitSize = 8; //scale 2*4const staticStorageBufferSize = staticStorageUnitSize * numObjects;const storageBufferSize = storageUnitSize * numObjects;const staticStorageBuffer = device.createBuffer({label: 'static storage for objects',size: staticStorageBufferSize,usage: GPUBufferUsage.STORAGE | GPUBufferUsage.COPY_DST,});const storageBuffer = device.createBuffer({label: 'changing storage for objects',size: storageBufferSize,usage: GPUBufferUsage.STORAGE | GPUBufferUsage.COPY_DST,});const staticStorageValues = new Float32Array(staticStorageBufferSize / 4);const storageValues = new Float32Array(storageBufferSize / 4);// offsets to the various storage values in float32 indicesconst kColorOffset = 0;const kOffsetOffset = 4;const kScaleOffset = 0;const objectInfos = [];for (let i = 0; i < numObjects; ++i) {const staticOffset = i * (staticStorageUnitSize / 4);staticStorageValues.set([rand(), rand(), rand(), 1], staticOffset + kColorOffset); // set the colorstaticStorageValues.set([rand(-0.9, 0.9), rand(-0.9, 0.9)], staticOffset + kOffsetOffset); // set the offsetobjectInfos.push({scale: rand(0.2, 0.5),});}device.queue.writeBuffer(staticStorageBuffer, 0, staticStorageValues);const bindGroup = device.createBindGroup({label: 'bind group for objects',layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: staticStorageBuffer }},{ binding: 1, resource: { buffer: storageBuffer }},],});const renderPassDescriptor = {label: 'canvas renderPass',colorAttachments: [{// view: <- to be filled out when renderingclearValue: [0.3, 0.3, 0.3, 1],loadOp: 'clear',storeOp: 'store',},],};function render() {renderPassDescriptor.colorAttachments[0].view =context.getCurrentTexture().createView();const encoder = device.createCommandEncoder();const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);const aspect = canvas.width / canvas.height;objectInfos.forEach(({scale}, ndx) => {const offset = ndx * (storageUnitSize / 4);storageValues.set([scale / aspect, scale], offset + kScaleOffset);});device.queue.writeBuffer(storageBuffer, 0, storageValues);pass.setBindGroup(0, bindGroup);pass.draw(3, kNumObjects); //call the vertex shader 3 times for a number of instancespass.end();device.queue.submit([encoder.finish()]);}const observer = new ResizeObserver(entries => {for (const entry of entries) {const canvas = entry.target;const width = entry.contentBoxSize[0].inlineSize;const height = entry.contentBoxSize[0].blockSize;canvas.width = canvas.clientWidth * window.devicePixelRatio;canvas.height = canvas.clientHeight * window.devicePixelRatio;render();}});observer.observe(canvas);}main();

This is just one of the possible ways to handle drawing alot of instances of the same object.

- The buffer usage flag is set to storage

- The storage variables in the shader code are declared as array of the struct

- A new parameter @builtin(instance_index) is added to the vertex shader,

which gets its value for each instance drawn

- Another struct is created for the output of the vertex shader,

which now also returns a color to pass to the fragment shader

- In the render function, set the scale in storageValues for each

object in objectInfos

and then upload all to the buffer at once

- Finally, call draw 3 times for each instance

Using storage buffers for vertex data

Another use case of storage buffers would be to store vertex data.

This is the previous example changed to draw rings instead:

const rand = (min, max) => {if (min === undefined) {min = 0;max = 1;} else if (max === undefined) {max = min;min = 0;}return min + Math.random() * (max - min);};function createCircleVertices({radius = 1,numSubdivisions = 24,innerRadius = 0,startAngle = 0,endAngle = Math.PI * 2,} = {}) {// 2 triangles per subdivision, 3 verts per tri, 2 values (xy) each.const numVertices = numSubdivisions * 3 * 2;const vertexData = new Float32Array(numSubdivisions * 2 * 3 * 2);let offset = 0;const addVertex = (x, y) => {vertexData[offset++] = x;vertexData[offset++] = y;};for (let i = 0; i < numSubdivisions; ++i) {const angle1 = startAngle + (i + 0) * (endAngle - startAngle) / numSubdivisions;const angle2 = startAngle + (i + 1) * (endAngle - startAngle) / numSubdivisions;// first triangleaddVertex(Math.cos(angle1) * radius, Math.sin(angle1) * radius);addVertex(Math.cos(angle2) * radius, Math.sin(angle2) * radius);addVertex(Math.cos(angle1) * innerRadius, Math.sin(angle1) * innerRadius);// second triangleaddVertex(Math.cos(angle1) * innerRadius, Math.sin(angle1) * innerRadius);addVertex(Math.cos(angle2) * radius, Math.sin(angle2) * radius);addVertex(Math.cos(angle2) * innerRadius, Math.sin(angle2) * innerRadius);}return {vertexData,numVertices,};}async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('need a browser that supports WebGPU');return;}const canvas = document.querySelector('canvas');const context = canvas.getContext('webgpu');const presentationFormat = navigator.gpu.getPreferredCanvasFormat();context.configure({device,format: presentationFormat,});const module = device.createShaderModule({code: `struct OurStruct {color: vec4f,offset: vec2f,};struct OtherStruct {scale: vec2f,};struct Vertex {position: vec2f,};struct VSOutput {@builtin(position) position: vec4f,@location(0) color: vec4f,};@group(0) @binding(0) var<storage, read> ourStructs: array<OurStruct>;@group(0) @binding(1) var<storage, read> otherStructs: array<OtherStruct>;@group(0) @binding(2) var<storage, read> pos: array<Vertex>;@vertex fn vs(@builtin(vertex_index) vertexIndex : u32,@builtin(instance_index) instanceIndex: u32) -> VSOutput {let otherStruct = otherStructs[instanceIndex];let ourStruct = ourStructs[instanceIndex];var vsOut: VSOutput;vsOut.position = vec4f(pos[vertexIndex].position * otherStruct.scale + ourStruct.offset, 0.0, 1.0);vsOut.color = ourStruct.color;return vsOut;}@fragment fn fs(vsOut: VSOutput) -> @location(0) vec4f {return vsOut.color;}`,});const pipeline = device.createRenderPipeline({label: 'storage buffer vertices',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: presentationFormat }],},});const kNumObjects = 100;const objectInfos = [];const staticUnitSize = 32; //color 4*4 bytes, offset 2*4, buffer 2*4const changingUnitSize = 8; //scale 2*4const staticStorageBufferSize = staticUnitSize * kNumObjects;const changingStorageBufferSize = changingUnitSize * kNumObjects;const staticStorageBuffer = device.createBuffer({label: 'static storage for objects',size: staticStorageBufferSize,usage: GPUBufferUsage.STORAGE | GPUBufferUsage.COPY_DST,});const changingStorageBuffer = device.createBuffer({label: 'changing storage for objects',size: changingStorageBufferSize,usage: GPUBufferUsage.STORAGE | GPUBufferUsage.COPY_DST,});// offsets to the various uniform values in float32 indicesconst kColorOffset = 0;const kOffsetOffset = 4;const kScaleOffset = 0;{const staticStorageValues = new Float32Array(staticStorageBufferSize / 4);for (let i = 0; i < kNumObjects; ++i) {const staticOffset = i * (staticUnitSize / 4);staticStorageValues.set([rand(), rand(), rand(), 1], staticOffset + kColorOffset); // set the colorstaticStorageValues.set([rand(-0.9, 0.9), rand(-0.9, 0.9)], staticOffset + kOffsetOffset); // set the offsetobjectInfos.push({scale: rand(0.2, 0.5),});}device.queue.writeBuffer(staticStorageBuffer, 0, staticStorageValues);}// a typed array to be used to update the changingStorageBufferconst storageValues = new Float32Array(changingStorageBufferSize / 4);// setup storage buffer for vertex dataconst { vertexData, numVertices } = createCircleVertices({radius: 0.5,innerRadius: 0.25,});const vertexStorageBuffer = device.createBuffer({label: 'storage buffer vertices',size: vertexData.byteLength,usage: GPUBufferUsage.STORAGE | GPUBufferUsage.COPY_DST,});device.queue.writeBuffer(vertexStorageBuffer, 0, vertexData);const bindGroup = device.createBindGroup({label: 'bind group for objects',layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: staticStorageBuffer }},{ binding: 1, resource: { buffer: changingStorageBuffer }},{ binding: 2, resource: { buffer: vertexStorageBuffer }},],});const renderPassDescriptor = {label: 'our basic canvas renderPass',colorAttachments: [{// view: <- to be filled out when renderingclearValue: [0.3, 0.3, 0.3, 1],loadOp: 'clear',storeOp: 'store',},],};function render() {renderPassDescriptor.colorAttachments[0].view =context.getCurrentTexture().createView();const encoder = device.createCommandEncoder();const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);const aspect = canvas.width / canvas.height;objectInfos.forEach(({scale}, ndx) => {const offset = ndx * (changingUnitSize / 4);storageValues.set([scale / aspect, scale], offset + kScaleOffset);});device.queue.writeBuffer(changingStorageBuffer, 0, storageValues);pass.setBindGroup(0, bindGroup);pass.draw(numVertices, kNumObjects);pass.end();device.queue.submit([encoder.finish()]);}const observer = new ResizeObserver(entries => {for (const entry of entries) {const canvas = entry.target;canvas.width = canvas.clientWidth * window.devicePixelRatio;canvas.height = canvas.clientHeight * window.devicePixelRatio;render();}});observer.observe(canvas);}main();

- Another struct and variable is added in the vertex shader,

it replaces the hard-coded array of positions

- Another storage buffer is added for the vertices for a circle

- The buffer is added to the bind group

The ring is drawn from triangles like this

Vertex Buffers

While it is growing in popularity to use storage buffers,

the traditional way to provide vertex data to a vertex shader is via vertex buffers

and attributes.

The difference is that vertex buffers arent accessed directly from the vertex shader.

Instead, WGPU is told what kind of data is in the buffer and how its organized,

it then pulls the data out of the buffer and provides it.

Changes to the random rings example to use a vertex buffer:

const module = device.createShaderModule({code: `struct Vertex {@location(0) position: vec2f,};...@group(0) @binding(0) var<storage, read> testStructs: array<TestStruct>;@group(0) @binding(1) var<storage, read> scaleStructs: array<ScaleStruct>;@vertex fn vs(vert: Vertex,@builtin(instance_index) instanceIndex: u32) -> VSOutput {...var vsOut: VSOutput;vsOut.position = vec4f(vert.position * scaleStruct.scale + testStruct.offset, 0.0, 1.0);vsOut.color = testStruct.color;return vsOut;}...`,});const pipeline = device.createRenderPipeline({label: 'vertex buffer pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',buffers: [{arrayStride: 2 * 4, // 2 floats, 4 bytes eachattributes: [{shaderLocation: 0, offset: 0, format: 'float32x2'}, // position],}],},...});const vertexBuffer = device.createBuffer({label: 'vertex buffer vertices',size: vertexData.byteLength,usage: GPUBufferUsage.VERTEX | GPUBufferUsage.COPY_DST,});device.queue.writeBuffer(vertexBuffer, 0, vertexData);function render() {pass.setPipeline(pipeline);pass.setVertexBuffer(0, vertexBuffer);...}

- Declares a struct to define the data for a vertex, important is to use @location(0) for the position field

- Adds a buffers array to the vertex entry of the pipeline, describing how to pull data out of 1 or more vertex buffers, arrayStride is how many bytes to get from the data for one vertex in the buffer to the next, the shaderLocation attribute corresponds to location(0)

- Changes the usage of the buffer holding vertex data to VERTEX and remove it from the bind group

- Sets the vertex buffer to use at draw time, the 0 corresponds to the first element of the render pipeline buffers array

The vertex format can be one of the values specified in the GPUVertexFormat definition.

Instancing

Attributes can advance per vertex or per instance.

Advancing per instance would be using @builtin(instance_index)

To accomplish the same thing with vertex buffers:

const module = device.createShaderModule({code: `struct Vertex {@location(0) position: vec2f,@location(1) color: vec4f,@location(2) offset: vec2f,@location(3) scale: vec2f,};struct VSOutput {@builtin(position) position: vec4f,@location(0) color: vec4f,};@vertex fn vs(vert: Vertex,) -> VSOutput {var vsOut: VSOutput;vsOut.position = vec4f(vert.position * vert.scale + vert.offset, 0.0, 1.0);vsOut.color = vert.color;return vsOut;}`,});const pipeline = device.createRenderPipeline({label: 'flat colors',layout: 'auto',vertex: {module,entryPoint: 'vs',buffers: [{arrayStride: 2 * 4, // 2 floats, 4 bytes eachattributes: [{shaderLocation: 0, offset: 0, format: 'float32x2'}, // position],},{arrayStride: 6 * 4, // 6 floats, 4 bytes eachstepMode: 'instance',attributes: [{shaderLocation: 1, offset: 0, format: 'float32x4'}, // color{shaderLocation: 2, offset: 16, format: 'float32x2'}, // offset],},{arrayStride: 2 * 4, // 2 floats, 4 bytes eachstepMode: 'instance',attributes: [{shaderLocation: 3, offset: 0, format: 'float32x2'}, // scale],},],},});const staticUnitSize = 24; //color is 4*4, offset is 2*4const changingUnitSize = 8; //scaleconst staticVertexBufferSize = staticUnitSize * kNumObjects;const changingVertexBufferSize = changingUnitSize * kNumObjects;const staticVertexBuffer = device.createBuffer({label: 'static vertex for objects',size: staticVertexBufferSize,usage: GPUBufferUsage.VERTEX | GPUBufferUsage.COPY_DST,});const changingVertexBuffer = device.createBuffer({label: 'changing vertex for objects',size: changingVertexBufferSize,usage: GPUBufferUsage.VERTEX | GPUBufferUsage.COPY_DST,});

- Changes the shader to only use vertex attributes

- Updates the render pipeline to tell it how to supply data to those attributes, stepMode: 'instance' means the attribute will only advance per instance, the default would be 'vertex'

- Changes the buffers usage to vertex, removes the padding from the staticUnitSize since vertex attributes do not have the same restrictions

- The bind group is no longer needed

- In the render function, set the buffers instead of the bind group

Make rings shaded:

function createCircleVertices({...} = {}) {// 2 triangles per subdivision, 3 verts per tri, 5 values (xyrgb) each.const numVertices = numSubdivisions * 3 * 2;const vertexData = new Float32Array(numVertices * (2 + 3));let offset = 0;const addVertex = (x, y, r, g, b) => {vertexData[offset++] = x;vertexData[offset++] = y;vertexData[offset++] = r;vertexData[offset++] = g;vertexData[offset++] = b;};const innerColor = [1, 1, 1];const outerColor = [0.1, 0.1, 0.1];for (let i = 0; i < numSubdivisions; ++i) {...// first triangleaddVertex(c1 * radius, s1 * radius, ...outerColor);addVertex(c2 * radius, s2 * radius, ...outerColor);addVertex(c1 * innerRadius, s1 * innerRadius, ...innerColor);// second triangleaddVertex(c1 * innerRadius, s1 * innerRadius, ...innerColor);addVertex(c2 * radius, s2 * radius, ...outerColor);addVertex(c2 * innerRadius, s2 * innerRadius, ...innerColor);}...}const module = device.createShaderModule({code: `struct Vertex {@location(0) position: vec2f,@location(1) color: vec4f,@location(2) offset: vec2f,@location(3) scale: vec2f,@location(4) perVertexColor: vec3f,};...@vertex fn vs(vert: Vertex,) -> VSOutput {var vsOut: VSOutput;vsOut.position = vec4f(vert.position * vert.scale + vert.offset, 0.0, 1.0);vsOut.color = vert.color * vec4f(vert.perVertexColor, 1);return vsOut;}...`,});const pipeline = device.createRenderPipeline({label: 'per vertex color',layout: 'auto',vertex: {module,entryPoint: 'vs',buffers: [{arrayStride: 5 * 4, // 5 floats, 4 bytes eachattributes: [{shaderLocation: 0, offset: 0, format: 'float32x2'}, // position{shaderLocation: 4, offset: 8, format: 'float32x3'}, // perVertexColor],},...],},...});

- Changes the circle vertex generation code to provide a dark color for vertices on the outer edge and a light color for the inner vertices

- Adds another attribute for a per vertex color to the shader

- Updates the buffer in the pipeline, interleave perVertexColor with the position attribute

Using normalized values to save space

The space usage could be optimized by using 8bit values for the color which has 4*4 bytes

and normalizing it from 0 ↔ 255 to 0.0 ↔ 1.0

function createCircleVertices({...} = {}) {// 2 triangles per subdivision, 3 verts per triconst numVertices = numSubdivisions * 3 * 2;// 2 32-bit values for position (xy) and 1 32-bit value for color (rgb_)// The 32-bit color value will be written/read as 4 8-bit valuesconst vertexData = new Float32Array(numVertices * (2 + 1));const colorData = new Uint8Array(vertexData.buffer);let offset = 0;let colorOffset = 8;const addVertex = (x, y, r, g, b) => {vertexData[offset++] = x;vertexData[offset++] = y;offset += 1; // skip the colorcolorData[colorOffset++] = r * 255;colorData[colorOffset++] = g * 255;colorData[colorOffset++] = b * 255;colorOffset += 9; // skip extra byte and the position};...}const staticUnitSize = 4 + 2 * 4; // color is 4 bytes, offset is 2*4 bytes...{const staticVertexValuesU8 = new Uint8Array(staticVertexBufferSize);const staticVertexValuesF32 = new Float32Array(staticVertexValuesU8.buffer);for (let i = 0; i < kNumObjects; ++i) {const staticOffsetU8 = i * staticUnitSize;const staticOffsetF32 = staticOffsetU8 / 4;staticVertexValuesU8.set([rand() * 255, rand() * 255, rand() * 255, 255], //colorstaticOffsetU8 + kColorOffset);staticVertexValuesF32.set([rand(-0.9, 0.9), rand(-0.9, 0.9)], //offsetstaticOffsetF32 + kOffsetOffset);objectInfos.push({scale: rand(0.2, 0.5),});}device.queue.writeBuffer(staticVertexBuffer, 0, staticVertexValuesF32);}const pipeline = device.createRenderPipeline({label: 'per vertex color',layout: 'auto',vertex: {module,entryPoint: 'vs',buffers: [{arrayStride: 2 * 4 + 4, // 2 floats, 4 bytes each + 4 bytesattributes: [{shaderLocation: 0, offset: 0, format: 'float32x2'}, // position{shaderLocation: 4, offset: 8, format: 'unorm8x4'}, // perVertexColor],},{arrayStride: 4 + 2 * 4, // 4 bytes + 2 floats, 4 bytes eachstepMode: 'instance',attributes: [{shaderLocation: 1, offset: 0, format: 'unorm8x4'}, // color{shaderLocation: 2, offset: 4, format: 'float32x2'}, // offset],},...],},...});

- Changes the vertex generation function and creates a Uint8Array view of the same data as vertexData ,

then when inserting the colors, expand it from 0 ↔ 1 to 0 ↔ 255

- Updates the per instance data, include 4 bytes for color instead of 4*4 in the staticUnitSize and set the buffer values accordingly to the new sizing

- Changes the pipeline to pull out the data as 8bit unsigned values and normalize them back to 0 ↔ 1

These changes result in 20 bytes per vertex being reduced to 12 and 24 bytes per instance to 12,

a 40% and 50% saving

Index Buffers

They describe the order to process and use the vertices.

Changed createCircleVertices function to reuse vertices when calculating subdivisions:

function createCircleVertices({...} = {}) {// 2 vertices at each subdivision, + 1 to wrap around the circle.const numVertices = (numSubdivisions + 1) * 2;...for (let i = 0; i <= numSubdivisions; ++i) {const angle = startAngle + (i + 0) * (endAngle - startAngle) / numSubdivisions;const c1 = Math.cos(angle);const s1 = Math.sin(angle);addVertex(c1 * radius, s1 * radius, ...outerColor);addVertex(c1 * innerRadius, s1 * innerRadius, ...innerColor);}const indexData = new Uint32Array(numSubdivisions * 6);let ndx = 0;for (let i = 0; i < numSubdivisions; ++i) {const ndxOffset = i * 2;// first triangleindexData[ndx++] = ndxOffset;indexData[ndx++] = ndxOffset + 1;indexData[ndx++] = ndxOffset + 2;// second triangleindexData[ndx++] = ndxOffset + 2;indexData[ndx++] = ndxOffset + 1;indexData[ndx++] = ndxOffset + 3;}return {vertexData,indexData,numVertices: indexData.length,};}const { vertexData, indexData, numVertices } = createCircleVertices({radius: 0.5,innerRadius: 0.25,});const indexBuffer = device.createBuffer({label: 'index buffer',size: indexData.byteLength,usage: GPUBufferUsage.INDEX | GPUBufferUsage.COPY_DST,});device.queue.writeBuffer(indexBuffer, 0, indexData);...pass.setIndexBuffer(indexBuffer, 'uint32');pass.drawIndexed(numVertices, kNumObjects);

Textures

Textures most often represent a 2D image, which is just a 2D array of color values.

What makes them special is that they can be accessed by special hardware called a sampler.

It can read up to 16 different values in a texture and blend them together in a way that is useful for many common use cases.

For example increasing the size of an image and filtering it to look less pixelated.

Modified inter-stage variables example drawing a quad with a texture:

async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('need a browser that supports WebGPU');return;}const canvas = document.querySelector('canvas');const context = canvas.getContext('webgpu');const presentationFormat = navigator.gpu.getPreferredCanvasFormat();context.configure({device,format: presentationFormat,});const module = device.createShaderModule({label: 'hardcoded textured quad shaders',code: `struct VertexShaderOutput {@builtin(position) position: vec4f,@location(0) texcoord: vec2f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> VertexShaderOutput {let pos = array(vec2f( 0.0, 0.0), // centervec2f( 1.0, 0.0), // right, centervec2f( 0.0, 1.0), // center, topvec2f( 0.0, 1.0), // center, topvec2f( 1.0, 0.0), // right, centervec2f( 1.0, 1.0), // right, top);var vsOutput: VertexShaderOutput;let xy = pos[vertexIndex];vsOutput.position = vec4f(xy, 0.0, 1.0);vsOutput.texcoord = xy;return vsOutput;}@group(0) @binding(0) var testSampler: sampler;@group(0) @binding(1) var testTexture: texture_2d<f32>;@fragment fn fs(fsInput: VertexShaderOutput) -> @location(0) vec4f {return textureSample(testTexture, testSampler, fsInput.texcoord);}`,});const pipeline = device.createRenderPipeline({label: 'hardcoded textured quad pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: presentationFormat }],},});const kTextureWidth = 5;const kTextureHeight = 7;const _ = [255, 0, 0, 255]; // redconst y = [255, 255, 0, 255]; // yellowconst b = [ 0, 0, 255, 255]; // blueconst textureData = new Uint8Array([b, _, _, _, _,_, y, y, y, _,_, y, _, _, _,_, y, y, _, _,_, y, _, _, _,_, y, _, _, _,_, _, _, _, _,].flat());const texture = device.createTexture({label: 'yellow F on red',size: [kTextureWidth, kTextureHeight],format: 'rgba8unorm',usage:GPUTextureUsage.TEXTURE_BINDING |GPUTextureUsage.COPY_DST,});device.queue.writeTexture({ texture },textureData,{ bytesPerRow: kTextureWidth * 4 },{ width: kTextureWidth, height: kTextureHeight },);const sampler = device.createSampler();const bindGroup = device.createBindGroup({layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: sampler },{ binding: 1, resource: texture.createView() },],});const renderPassDescriptor = {label: 'basic canvas renderPass',colorAttachments: [{// view: <- to be filled out when renderingclearValue: [0.3, 0.3, 0.3, 1],loadOp: 'clear',storeOp: 'store',},],};function render() {renderPassDescriptor.colorAttachments[0].view =context.getCurrentTexture().createView();const encoder = device.createCommandEncoder({label: 'render quad encoder',});const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);pass.setBindGroup(0, bindGroup);pass.draw(6);pass.end();const commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);}const observer = new ResizeObserver(entries => {for (const entry of entries) {const canvas = entry.target;canvas.width = canvas.clientWidth * window.devicePixelRatio;canvas.height = canvas.clientHeight * window.devicePixelRatio;render();}});observer.observe(canvas);}main();

- Change the positions in the vertex shader to draw a quad in the top right of the canvas, and change the output to pass texcoord instead of color, which will also be interpolated between the 3 vertices of each triangle

- Creates a 5x7 texel F in an rgba8unorm texture, unorm means unsigned normalized which means the values will be converted from unsigned bytes with values from 0 to 255 to floating point values from 0.0 to 1.0

- Creates a texture, sets its size, format, and sets the usage flag to TEXTURE_BINDING to be able bind it into a bind group, COPY_DST means that data can be copied to it

- Copies data to the texture with device.queue.writeTexture , the third parameter specifies how many bytes to get from one row of the source data to the next row

- Creates a sampler with device.createSampler

- Adds the texture and sampler to a bind group with bindings that match the @binding in the shader

- Update the render function: set the bind group and call the vertex shader 6 times

Note: It's not common to pass position values as texture coordinates but in this case it happens that the texture coordinates needed match the positions.

To fix the texture being upside down, either flip the coordinates in the vertex or fragment shader, or simply flip the textureData

vsOutput.texcoord = vec2f(xy.x, 1.0 - xy.y);

magFilter

Since the previously drawn texture is larger than 5x7 texel, the magFilter is used by the sampler, which magnifies the texture. If the filter was changed from nearest to linear then it would linearly interpolate between 4 pixels.

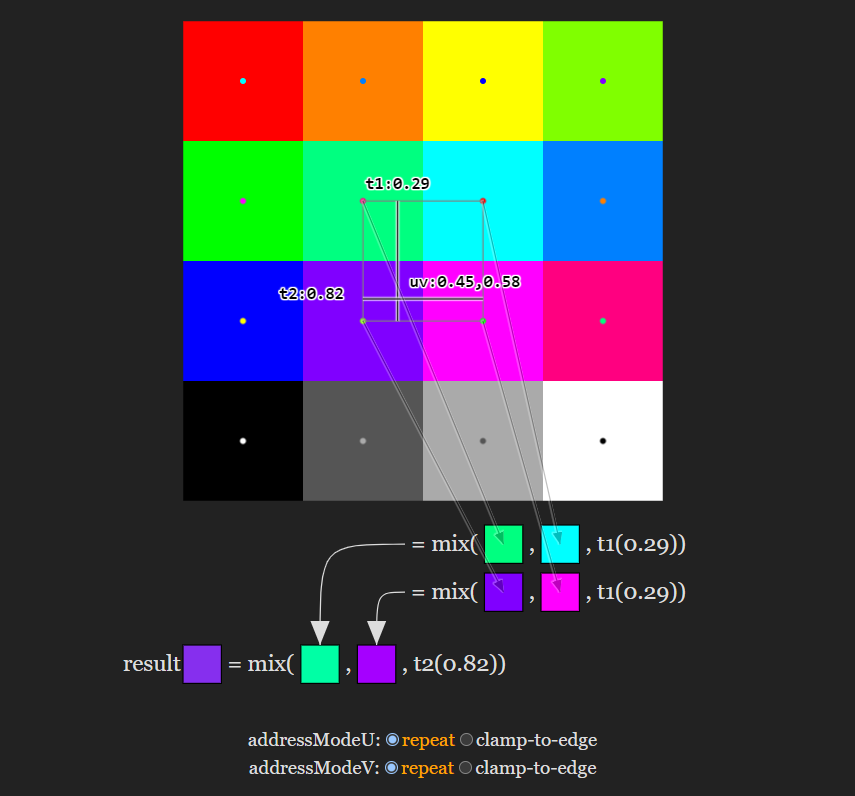

Texture coordinates are often called UVs, for a given uv, the closest 4 pixels are chosen.

t1 is the horizontal distance between the top left chosen pixel's center and the pixel to its right's center.

t2 is the same but vertically.

The sampler settings addressModeU and addressModeV can be set to repeat or clamp-to-edge,

when set to 'repeat' and the coordinate is within half a texel of the edge of the texture it wraps around and blends with pixels on the opposite side,

when set to 'clamp-to-edge', the coordinate is clamped so that it cant go into the half texel on the edge

const sampler = device.createSampler({addressModeU: 'repeat',addressModeV:'repeat',magFilter: 'linear',});

minFilter

The minFilter setting does similar math to magFilter for when the texture is drawn smaller than its size.

Changes to the previous example to create a 2x2 moving quad to show the flickering:

canvas {image-rendering: pixelated;image-rendering: crisp-edges;}// CSS ^const module = device.createShaderModule({label: 'our hardcoded textured quad shaders',code: `struct Uniforms {scale: vec2f,offset: vec2f,};...@group(0) @binding(2) var<uniform> uni: Uniforms;@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> OurVertexShaderOutput {let pos = array(...);var vsOutput: OurVertexShaderOutput;let xy = pos[vertexIndex];vsOutput.position = vec4f(xy * uni.scale + uni.offset, 0.0, 1.0);vsOutput.texcoord = xy;return vsOutput;}...`,});const uniformBufferSize = 16 + // scale is 2*4, offset is 2*4const uniformBuffer = device.createBuffer({label: 'uniforms for quad',size: uniformBufferSize,usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST,});const uniformValues = new Float32Array(uniformBufferSize / 4);const kScaleOffset = 0;const kOffsetOffset = 2;const sampler = device.createSampler({addressModeU: 'repeat',addressModeV:'repeat',magFilter: 'linear',minFilter: 'linear' // <--});const bindGroup = device.createBindGroup({layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: sampler },{ binding: 1, resource: texture.createView() },{ binding: 2, resource: { buffer: uniformBuffer }}, // <--],});...function render(time) {time *= 0.001;const scaleX = 4 / canvas.width;const scaleY = 4 / canvas.height;uniformValues.set([scaleX, scaleY], kScaleOffset);uniformValues.set([Math.sin(time * 0.25) * 0.8, -0.8], kOffsetOffset);device.queue.writeBuffer(uniformBuffer, 0, uniformValues);...requestAnimationFrame(render);}requestAnimationFrame(render);const observer = new ResizeObserver(entries => {for (const entry of entries) {const canvas = entry.target;const width = entry.contentBoxSize[0].inlineSize / 64 | 0;const height = entry.contentBoxSize[0].blockSize / 64 | 0;canvas.width = Math.max(1, Math.min(width, device.limits.maxTextureDimension2D));canvas.height = Math.max(1, Math.min(height, device.limits.maxTextureDimension2D));}});observer.observe(canvas);

- Makes the canvas low-res in the CSS, so it doesnt do the same magFilter: 'linear' effect on it

- Lowers the resolution in the ResizeObserver callback as well

- Adds a uniform variable for scale and offset in the vertex shader

- Creates and adds a uniform buffer to the bind group

- Sets the values in the render function, remove the render call in ResizeObserver and do a requestAnimationFrame loop instead (also called a rAF loop)

A reason for the flickering is that the quad is positioned with real numbers but pixels are integers. This can be solved with "mip-mapping", so pre-blending the colors when resizing. The best algorith for blending the pixels from one mip to the next is a matter of research and opinion. Here would be one in the example above.

But also any data can be put into each mip level using the canvas 2d api, for example:

const createCheckedMipmap = () => {const ctx = document.createElement('canvas').getContext('2d', {willReadFrequently: true});const levels = [{ size: 64, color: 'rgb(128,0,255)', },{ size: 32, color: 'rgb(0,255,0)', },{ size: 16, color: 'rgb(255,0,0)', },{ size: 8, color: 'rgb(255,255,0)', },{ size: 4, color: 'rgb(0,0,255)', },{ size: 2, color: 'rgb(0,255,255)', },{ size: 1, color: 'rgb(255,0,255)', },];return levels.map(({size, color}, i) => {ctx.canvas.width = size;ctx.canvas.height = size;ctx.fillStyle = i & 1 ? '#000' : '#fff';ctx.fillRect(0, 0, size, size);ctx.fillStyle = color;ctx.fillRect(0, 0, size / 2, size / 2);ctx.fillRect(size / 2, size / 2, size / 2, size / 2);return ctx.getImageData(0, 0, size, size);});};

This creates a mipmap with entirely different colors for each level for demonstration.

The texture is then created with that data

const createTextureWithMips = (mips, label) => {const texture = device.createTexture({label,size: [mips[0].width, mips[0].height],mipLevelCount: mips.length,format: 'rgba8unorm',usage:GPUTextureUsage.TEXTURE_BINDING |GPUTextureUsage.COPY_DST,});mips.forEach(({data, width, height}, mipLevel) => {device.queue.writeTexture({ texture, mipLevel },data,{ bytesPerRow: width * 4 },{ width, height },);});return texture;};const textures = [createTextureWithMips(createBlendedMipmap(), 'blended'),createTextureWithMips(createCheckedMipmap(), 'checker'),];

mipmapFilter

The mipmapFilter setting can also be set to nearest or linear.

This chooses if colors are blended between mip levels.

It's most often used when drawing in 3D.

In the example above, all planes on the top are set to nearest and bottom to linear.

Which one to use depends on the style and also speed, nearest is faster.

Texture Types and Views

There are three types of textures: 1d, 2d, and 3d.

Each has its limits, these can be read with device.limits.maxTextureDimension2D for example

There are six types of texture views: 1d, 2d, 2d-array, 3d, cube, cube-array.

If a 2d texture has 6 layers it can have a cube view.

Each type of texture has its corresponding type in WGSL:

| type | WGSL types |

|---|---|

| "1d" | texture_1d or texture_storage_1d |

| "2d" | texture_2d or texture_storage_2d or texture_multisampled_2d as well as a special case for in certain situations texture_depth_2d and texture_depth_multisampled_2d |

| "2d-array" | texture_2d_array or texture_storage_2d_array and sometimes texture_depth_2d_array |

| "3d" | texture_3d or texture_storage_3d |

| "cube" | texture_cube and sometimes texture_depth_cube |

| "cube-array" | texture_cube_array and sometimes texture_depth_cube_array |

Texture Formats

Loading Images into Textures

Example:

async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('need a browser that supports WebGPU');return;}const canvas = document.querySelector('canvas');const context = canvas.getContext('webgpu');const presentationFormat = navigator.gpu.getPreferredCanvasFormat();context.configure({device,format: presentationFormat,});const module = device.createShaderModule({label: 'hardcoded textured quad shaders',code: `struct VertexShaderOutput {@builtin(position) position: vec4f,@location(0) texcoord: vec2f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> VertexShaderOutput {let pos = array(vec2f( 0.0, 0.0), // centervec2f( 1.0, 0.0), // right, centervec2f( 0.0, 1.0), // center, topvec2f( 0.0, 1.0), // center, topvec2f( 1.0, 0.0), // right, centervec2f( 1.0, 1.0), // right, top);var vsOutput: VertexShaderOutput;let xy = pos[vertexIndex];vsOutput.position = vec4f(xy, 0.0, 1.0);vsOutput.texcoord = xy;return vsOutput;}@group(0) @binding(0) var testSampler: sampler;@group(0) @binding(1) var testTexture: texture_2d<f32>;@fragment fn fs(fsInput: VertexShaderOutput) -> @location(0) vec4f {return textureSample(testTexture, testSampler, fsInput.texcoord);}`,});const pipeline = device.createRenderPipeline({label: 'hardcoded textured quad pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: presentationFormat }],},});async function loadImageBitmap(url) {const res = await fetch(url);const blob = await res.blob();return await createImageBitmap(blob, { colorSpaceConversion: 'none' });}const url = 'https://webgpufundamentals.org/webgpu/resources/images/f-texture.png';const source = await loadImageBitmap(url);const texture = device.createTexture({label: url,format: 'rgba8unorm',size: [source.width, source.height],usage: GPUTextureUsage.TEXTURE_BINDING |GPUTextureUsage.COPY_DST |GPUTextureUsage.RENDER_ATTACHMENT,});device.queue.copyExternalImageToTexture({ source, flipY: true },{ texture },{ width: source.width, height: source.height },);const sampler = device.createSampler();const bindGroup = device.createBindGroup({layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: sampler },{ binding: 1, resource: texture.createView() },],});const renderPassDescriptor = {label: 'our basic canvas renderPass',colorAttachments: [{clearValue: [0.3, 0.3, 0.3, 1],loadOp: 'clear',storeOp: 'store',},],};function render() {renderPassDescriptor.colorAttachments[0].view =context.getCurrentTexture().createView();const encoder = device.createCommandEncoder({label: 'render quad encoder',});const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);pass.setBindGroup(0, bindGroup);pass.draw(6);pass.end();const commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);}const observer = new ResizeObserver(entries => {for (const entry of entries) {const canvas = entry.target;const width = entry.contentBoxSize[0].inlineSize;const height = entry.contentBoxSize[0].blockSize;canvas.width = Math.max(1, Math.min(width, device.limits.maxTextureDimension2D));canvas.height = Math.max(1, Math.min(height, device.limits.maxTextureDimension2D));render();}});observer.observe(canvas);}main();

- Loads an image and gets an ImageBitmap from it

- Creates a texture with usage flags COPY_DST and RENDER_ATTACHMENT since they're required by copyExternalImageToTexture

- Use said function to copy the bitmap to the texture, the parameters are source, destination and size

Generating mips on the GPU

Changes to the mipmapFilter example:

const numMipLevels = (...sizes) => {const maxSize = Math.max(...sizes);return 1 + Math.log2(maxSize) | 0;};const texture = device.createTexture({mipLevelCount: numMipLevels(source.width, source.height),...});const generateMips = (() => {let sampler;let module;const pipelineByFormat = {};return function generateMips(device, texture) {if (!module) {module = device.createShaderModule({label: 'textured quad shaders for mip level generation',code: `struct VSOutput {@builtin(position) position: vec4f,@location(0) texcoord: vec2f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> VSOutput {let pos = array(vec2f( 0.0, 0.0), // centervec2f( 1.0, 0.0), // right, centervec2f( 0.0, 1.0), // center, topvec2f( 0.0, 1.0), // center, topvec2f( 1.0, 0.0), // right, centervec2f( 1.0, 1.0), // right, top);var vsOutput: VSOutput;let xy = pos[vertexIndex];vsOutput.position = vec4f(xy * 2.0 - 1.0, 0.0, 1.0);vsOutput.texcoord = vec2f(xy.x, 1.0 - xy.y);return vsOutput;}@group(0) @binding(0) var testSampler: sampler;@group(0) @binding(1) var testTexture: texture_2d<f32>;@fragment fn fs(fsInput: VSOutput) -> @location(0) vec4f {return textureSample(testTexture, testSampler, fsInput.texcoord);}`,});sampler = device.createSampler({minFilter: 'linear',});}if (!pipelineByFormat[texture.format]) {pipelineByFormat[texture.format] = device.createRenderPipeline({label: 'mip level generator pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: texture.format }],},});}const pipeline = pipelineByFormat[texture.format];const encoder = device.createCommandEncoder({label: 'mip gen encoder',});let width = texture.width;let height = texture.height;let baseMipLevel = 0;while (width > 1 || height > 1) {width = Math.max(1, width / 2 | 0);height = Math.max(1, height / 2 | 0);const bindGroup = device.createBindGroup({layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: sampler },{ binding: 1, resource: texture.createView({baseMipLevel, mipLevelCount: 1}) },],});++baseMipLevel;const renderPassDescriptor = {label: 'basic canvas renderPass',colorAttachments: [{view: texture.createView({baseMipLevel, mipLevelCount: 1}),loadOp: 'clear',storeOp: 'store',},],};const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);pass.setBindGroup(0, bindGroup);pass.draw(6);pass.end();}device.queue.submit([encoder.finish()]);};})();function copySourceToTexture(device, texture, source, {flipY} = {}) {device.queue.copyExternalImageToTexture({ source, flipY, },{ texture },{ width: source.width, height: source.height },);if (texture.mipLevelCount > 1) {generateMips(device, texture);}}function createTextureFromSource(device, source, options = {}) {const texture = device.createTexture({format: 'rgba8unorm',mipLevelCount: options.mips ? numMipLevels(source.width, source.height) : 1,size: [source.width, source.height],usage: GPUTextureUsage.TEXTURE_BINDING |GPUTextureUsage.COPY_DST |GPUTextureUsage.RENDER_ATTACHMENT,});copySourceToTexture(device, texture, source, options);return texture;}async function createTextureFromImage(device, url, options) {const imgBitmap = await loadImageBitmap(url);return createTextureFromSource(device, imgBitmap, options);}const textures = await Promise.all([await createTextureFromImage(device,'resources/images/f-texture.png', {mips: true, flipY: false}),await createTextureFromImage(device,'resources/images/Granite_paving_tileable_512x512.jpeg', {mips: true}),]);...

- numMipLevels calculates the number of mip levels needed by using log2 on the the biggest size, which returns the amount of times it can be divided by 2, and adds 1 to that

- Uses that number for the mipLevelCount when creating the texture

- Creates a function to generate mips by drawing textured quads and using minFilter: 'linear'

- Creates a helper function that loads an image and calls another one that creates a texture with it and calls another one to fill it with the data

- Creates some textures using these functions

Loading Canvas

HTMLCanvasElement can be used to draw things in a 2d canvas, and then get the result in a texture in WGPU. It can have its benefits since the 2d canvas has a relatively high level API.

Example animation:

const size = 256;const half = size / 2;const ctx = document.createElement('canvas').getContext('2d');ctx.canvas.width = size;ctx.canvas.height = size;const hsl = (h, s, l) => `hsl(${h * 360 | 0}, ${s * 100}%, ${l * 100 | 0}%)`;function update2DCanvas(time) {time *= 0.0005;ctx.clearRect(0, 0, size, size);ctx.save();ctx.translate(half, half);const num = 20;for (let i = 0; i < num; ++i) {ctx.fillStyle = hsl(i / num * 0.2 + time * 0.1, 1, i % 2 * 0.5);ctx.fillRect(-half, -half, size, size);ctx.rotate(time * 0.5);ctx.scale(0.85, 0.85);ctx.translate(size / 16, 0);}ctx.restore();}function render(time) {update2DCanvas(time);requestAnimationFrame(render);}requestAnimationFrame(render);document.body.appendChild(ctx.canvas);

Changes to the previous example to load that canvas into wgpu:

const texture = createTextureFromSource(device, ctx.canvas, {mips: true});const textures = await Promise.all([texture,]);function render(time) {update2DCanvas(time);copySourceToTexture(device, texture, ctx.canvas);...requestAnimationFrame(render);}requestAnimationFrame(render);const observer = new ResizeObserver(entries => {for (const entry of entries) {const canvas = entry.target;const width = entry.contentBoxSize[0].inlineSize;const height = entry.contentBoxSize[0].blockSize;canvas.width = Math.max(1, Math.min(width, device.limits.maxTextureDimension2D));canvas.height = Math.max(1, Math.min(height, device.limits.maxTextureDimension2D));}});observer.observe(canvas);

Loading Video

Loading a video is similar but uses the <video> element.

The size properties on HTMLVideoElement are called videoWidth and videoHeight.

Updates to the previous code to handle that difference:

function getSourceSize(source) {return [source.videoWidth || source.width,source.videoHeight || source.height,];}function copySourceToTexture(device, texture, source, {flipY} = {}) {device.queue.copyExternalImageToTexture({ source, flipY, },{ texture },getSourceSize(source),);...}function createTextureFromSource(device, source, options = {}) {const size = getSourceSize(source);const texture = device.createTexture({size,...});...}function startPlayingAndWaitForVideo(video) {return new Promise((resolve, reject) => {video.addEventListener('error', reject);if ('requestVideoFrameCallback' in video) {video.requestVideoFrameCallback(resolve);} else {const timeWatcher = () => {if (video.currentTime > 0) {resolve();} else {requestAnimationFrame(timeWatcher);}};timeWatcher();}video.play().catch(reject);});}function waitForClick() {return new Promise(resolve => {window.addEventListener('click',() => {document.querySelector('#start').style.display = 'none';resolve();},{ once: true });});}const video = document.createElement('video');video.muted = true;video.loop = true;video.preload = 'auto';video.src = 'resources/videos/example.webm';await waitForClick();await startPlayingAndWaitForVideo(video);canvas.addEventListener('click', () => {if (video.paused) {video.play();} else {video.pause();}});const texture = createTextureFromSource(device, video, {mips: true});function render() {copySourceToTexture(device, texture, video);...}

- A video needs to have started playing before it can be passed to wgpu,

this can be done with video.requestVideoFrameCallback in modern browsers,

and for a fallback for older ones wait for the time to advance

Optimization

Only update the video for each new frame but the texture at its own frame rate:

...await startPlayingAndWaitForVideo(video);let alwaysUpdateVideo = !('requestVideoFrameCallback' in video);let haveNewVideoFrame = false;if (!alwaysUpdateVideo) {function recordHaveNewFrame() {haveNewVideoFrame = true;video.requestVideoFrameCallback(recordHaveNewFrame);}video.requestVideoFrameCallback(recordHaveNewFrame);}function render() {if (alwaysUpdateVideo || haveNewVideoFrame) {haveNewVideoFrame = false;copySourceToTexture(device, texture, video);}...}

Texture Atlases

A texture atlas is an image containing multiple smaller images packed together to reduce overall dimensions. The texture coordinates can be used to select which parts go where.

This is alot more efficient than using multiple textures.

Example that creates a rotating cube and provides texture coordinates that select each portion of an image onto a specific face of the cube (3D stuff covered later):

import { mat4 } from 'https://wgpu-matrix.org/dist/2.x/wgpu-matrix.module.js';function createCubeVertices() {const vertexData = new Float32Array([// position | texture coordinate-1, 1, 1, 0 , 0 ,-1, -1, 1, 0 , 0.5,1, 1, 1, 0.25, 0 ,1, -1, 1, 0.25, 0.5,1, 1, -1, 0.25, 0 ,1, 1, 1, 0.5 , 0 ,1, -1, -1, 0.25, 0.5,1, -1, 1, 0.5 , 0.5,1, 1, -1, 0.5 , 0 ,1, -1, -1, 0.5 , 0.5,-1, 1, -1, 0.75, 0 ,-1, -1, -1, 0.75, 0.5,-1, 1, 1, 0 , 0.5,-1, 1, -1, 0.25, 0.5,-1, -1, 1, 0 , 1 ,-1, -1, -1, 0.25, 1 ,1, -1, 1, 0.25, 0.5,-1, -1, 1, 0.5 , 0.5,1, -1, -1, 0.25, 1 ,-1, -1, -1, 0.5 , 1 ,-1, 1, 1, 0.5 , 0.5,1, 1, 1, 0.75, 0.5,-1, 1, -1, 0.5 , 1 ,1, 1, -1, 0.75, 1 ,]);const indexData = new Uint16Array([0, 1, 2, 2, 1, 3,4, 5, 6, 6, 5, 7,8, 9, 10, 10, 9, 11,12, 13, 14, 14, 13, 15,16, 17, 18, 18, 17, 19,20, 21, 22, 22, 21, 23,]);return {vertexData,indexData,numVertices: indexData.length,};}async function main() {const adapter = await navigator.gpu?.requestAdapter();const device = await adapter?.requestDevice();if (!device) {alert('need a browser that supports WebGPU');return;}const canvas = document.querySelector('canvas');const context = canvas.getContext('webgpu');const presentationFormat = navigator.gpu.getPreferredCanvasFormat();context.configure({device,format: presentationFormat,alphaMode: 'premultiplied',});const module = device.createShaderModule({code: `struct Uniforms {matrix: mat4x4f,};struct Vertex {@location(0) position: vec4f,@location(1) texcoord: vec2f,};struct VSOutput {@builtin(position) position: vec4f,@location(0) texcoord: vec2f,};@group(0) @binding(0) var<uniform> uni: Uniforms;@group(0) @binding(1) var testSampler: sampler;@group(0) @binding(2) var testTexture: texture_2d<f32>;@vertex fn vs(vert: Vertex) -> VSOutput {var vsOut: VSOutput;vsOut.position = uni.matrix * vert.position;vsOut.texcoord = vert.texcoord;return vsOut;}@fragment fn fs(vsOut: VSOutput) -> @location(0) vec4f {return textureSample(testTexture, testSampler, vsOut.texcoord);}`,});const pipeline = device.createRenderPipeline({label: '2 attributes',layout: 'auto',vertex: {module,entryPoint: 'vs',buffers: [{arrayStride: (3 + 2) * 4, // (3+2) floats 4 bytes eachattributes: [{shaderLocation: 0, offset: 0, format: 'float32x3'}, // position{shaderLocation: 1, offset: 12, format: 'float32x2'}, // texcoord],},],},fragment: {module,entryPoint: 'fs',targets: [{ format: presentationFormat }],},primitive: {cullMode: 'back',},depthStencil: {depthWriteEnabled: true,depthCompare: 'less',format: 'depth24plus',},});const numMipLevels = (...sizes) => {const maxSize = Math.max(...sizes);return 1 + Math.log2(maxSize) | 0;};async function loadImageBitmap(url) {const res = await fetch(url);const blob = await res.blob();return await createImageBitmap(blob, { colorSpaceConversion: 'none' });}function copySourceToTexture(device, texture, source, {flipY} = {}) {device.queue.copyExternalImageToTexture({ source, flipY, },{ texture },{ width: source.width, height: source.height },);if (texture.mipLevelCount > 1) {generateMips(device, texture);}}function createTextureFromSource(device, source, options = {}) {const texture = device.createTexture({format: 'rgba8unorm',mipLevelCount: options.mips ? numMipLevels(source.width, source.height) : 1,size: [source.width, source.height],usage: GPUTextureUsage.TEXTURE_BINDING |GPUTextureUsage.COPY_DST |GPUTextureUsage.RENDER_ATTACHMENT,});copySourceToTexture(device, texture, source, options);return texture;}const generateMips = (() => {let sampler;let module;const pipelineByFormat = {};return function generateMips(device, texture) {if (!module) {module = device.createShaderModule({label: 'textured quad shaders for mip level generation',code: `struct VSOutput {@builtin(position) position: vec4f,@location(0) texcoord: vec2f,};@vertex fn vs(@builtin(vertex_index) vertexIndex : u32) -> VSOutput {let pos = array(vec2f( 0.0, 0.0), // centervec2f( 1.0, 0.0), // right, centervec2f( 0.0, 1.0), // center, top// 2st trianglevec2f( 0.0, 1.0), // center, topvec2f( 1.0, 0.0), // right, centervec2f( 1.0, 1.0), // right, top);var vsOutput: VSOutput;let xy = pos[vertexIndex];vsOutput.position = vec4f(xy * 2.0 - 1.0, 0.0, 1.0);vsOutput.texcoord = vec2f(xy.x, 1.0 - xy.y);return vsOutput;}@group(0) @binding(0) var testSampler: sampler;@group(0) @binding(1) var testTexture: texture_2d<f32>;@fragment fn fs(fsInput: VSOutput) -> @location(0) vec4f {return textureSample(testTexture, testSampler, fsInput.texcoord);}`,});sampler = device.createSampler({minFilter: 'linear',magFilter: 'linear',});}if (!pipelineByFormat[texture.format]) {pipelineByFormat[texture.format] = device.createRenderPipeline({label: 'mip level generator pipeline',layout: 'auto',vertex: {module,entryPoint: 'vs',},fragment: {module,entryPoint: 'fs',targets: [{ format: texture.format }],},});}const pipeline = pipelineByFormat[texture.format];const encoder = device.createCommandEncoder({label: 'mip gen encoder',});let width = texture.width;let height = texture.height;let baseMipLevel = 0;while (width > 1 || height > 1) {width = Math.max(1, width / 2 | 0);height = Math.max(1, height / 2 | 0);const bindGroup = device.createBindGroup({layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: sampler },{ binding: 1, resource: texture.createView({baseMipLevel, mipLevelCount: 1}) },],});++baseMipLevel;const renderPassDescriptor = {label: 'basic canvas renderPass',colorAttachments: [{view: texture.createView({baseMipLevel, mipLevelCount: 1}),loadOp: 'clear',storeOp: 'store',},],};const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);pass.setBindGroup(0, bindGroup);pass.draw(6);pass.end();}const commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);};})();async function createTextureFromImage(device, url, options) {const imgBitmap = await loadImageBitmap(url);return createTextureFromSource(device, imgBitmap, options);}const texture = await createTextureFromImage(device,'https://webgpufundamentals.org/webgpu/resources/images/noodles.jpg', {mips: true, flipY: false});const sampler = device.createSampler({magFilter: 'linear',minFilter: 'linear',mipmapFilter: 'linear',});const uniformBufferSize = (16) * 4;const uniformBuffer = device.createBuffer({label: 'uniforms',size: uniformBufferSize,usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST,});const uniformValues = new Float32Array(uniformBufferSize / 4);const kMatrixOffset = 0;const matrixValue = uniformValues.subarray(kMatrixOffset, kMatrixOffset + 16);const { vertexData, indexData, numVertices } = createCubeVertices();const vertexBuffer = device.createBuffer({label: 'vertex buffer vertices',size: vertexData.byteLength,usage: GPUBufferUsage.VERTEX | GPUBufferUsage.COPY_DST,});device.queue.writeBuffer(vertexBuffer, 0, vertexData);const indexBuffer = device.createBuffer({label: 'index buffer',size: vertexData.byteLength,usage: GPUBufferUsage.INDEX | GPUBufferUsage.COPY_DST,});device.queue.writeBuffer(indexBuffer, 0, indexData);const bindGroup = device.createBindGroup({label: 'bind group for object',layout: pipeline.getBindGroupLayout(0),entries: [{ binding: 0, resource: { buffer: uniformBuffer }},{ binding: 1, resource: sampler },{ binding: 2, resource: texture.createView() },],});const renderPassDescriptor = {label: 'basic canvas renderPass',colorAttachments: [{loadOp: 'clear',storeOp: 'store',},],depthStencilAttachment: {depthClearValue: 1.0,depthLoadOp: 'clear',depthStoreOp: 'store',},};const degToRad = d => d * Math.PI / 180;const rotation = [degToRad(20), degToRad(25), degToRad(0)];let depthTexture;function render(time) {canvas.width = canvas.clientWidth * window.devicePixelRatio;canvas.height = canvas.clientHeight * window.devicePixelRatio;const canvasTexture = context.getCurrentTexture();renderPassDescriptor.colorAttachments[0].view = canvasTexture.createView();time *= 0.0006;if (!depthTexture ||depthTexture.width !== canvasTexture.width ||depthTexture.height !== canvasTexture.height) {if (depthTexture) {depthTexture.destroy();}depthTexture = device.createTexture({size: [canvasTexture.width, canvasTexture.height],format: 'depth24plus',usage: GPUTextureUsage.RENDER_ATTACHMENT,});}renderPassDescriptor.depthStencilAttachment.view = depthTexture.createView();const encoder = device.createCommandEncoder();const pass = encoder.beginRenderPass(renderPassDescriptor);pass.setPipeline(pipeline);pass.setVertexBuffer(0, vertexBuffer);pass.setIndexBuffer(indexBuffer, 'uint16');const aspect = canvas.clientWidth / canvas.clientHeight;mat4.perspective(60 * Math.PI / 180,aspect,0.1, // zNear10, // zFarmatrixValue,);const view = mat4.lookAt([0, 1, 5], // camera position[0, 0, 0], // target[0, 1, 0], // up);mat4.multiply(matrixValue, view, matrixValue);mat4.rotateX(matrixValue, rotation[0], matrixValue);mat4.rotateY(matrixValue, time * 0.5, matrixValue);mat4.rotateZ(matrixValue, rotation[2], matrixValue);device.queue.writeBuffer(uniformBuffer, 0, uniformValues);pass.setBindGroup(0, bindGroup);pass.drawIndexed(numVertices);pass.end();const commandBuffer = encoder.finish();device.queue.submit([commandBuffer]);requestAnimationFrame(render);}requestAnimationFrame(render);}main();

Using Video Efficiently

Beside copyExternalImageToTexture, which copies the current frame from the video itself into a pre-existing texture,

there's also importExternalTexture which provides a GPUExternalTexture that represents the data in the video directly, no copy made.

Note: the texture is only valid until the current javascript task is exited,

in the shader, texture_external has to be used,

and since external textures cant have a mipmap, textureSampleBaseClampToEdge has to be used which only samples the base texture mip level 0

Changes to the previous video example: